Artificial intelligence – real threats or groundless fears?

Some of the world’s greatest thinkers, including the author Stanisław Lem, the physicist Stephen Hawking, the Nobel Prize winner Frank Wilczek, and the mathematician John McCarthy, have warned against the rising threat from intelligent machines. The threat is not only that humans will lose their jobs to machines that provide services or manufacture goods, but also that the survival of human civilization could be at stake. Should we be worried?

Alarm bells from scientists and philosophers aside, what does artificial intelligence have to offer society, business and our civilization? Will machines ever be able to compete with people intellectually? Will machines ever pose a real danger to humans? Before attempting to answer these questions, it is worth defining what artificial intelligence is.

Defining artificial intelligence

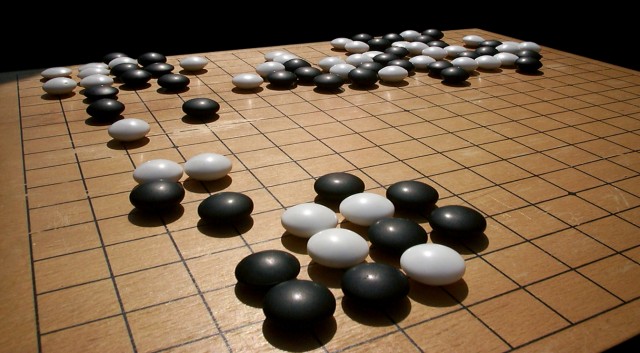

To begin with, one should distinguish robots, which are simple automatons, from artificial intelligence. While robots are mechanical machines designed to repeat a large but limited number of actions in a well-defined environment, artificial intelligence has the ability to learn by itself. Intelligence is not only about performing tasks, however complicated they may be. The distinguishing feature of AI is learning and deciding when, where and how to perform a task. This is precisely what DeepMind’s AlphaGo project was about. It achieved things that had never been done before, such as defeating a professional Go player. The machine was programmed to play the game of Go. It absorbed master-level strategies from a vast database of games played by human experts and ultimately learned how to win. The learning process began with those human games and continued with millions of matches against another machine of its kind (an earlier version of itself). AlphaGo learned how to be a master. As observers have confirmed, the machine kept learning from one game to the next while playing against a master.
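The idea of improving by playing against a version of oneself can be shown on a much smaller scale. The Python sketch below is only a toy. It does not reproduce AlphaGo, which combined deep neural networks with Monte Carlo tree search. Instead, it assumes one-pile Nim rather than Go: players alternately remove one to three stones, and whoever takes the last stone wins. The program keeps a simple table of move values and updates it while playing both sides. The names `train`, `best_move` and `MAX_PILE` are illustrative, not taken from any real system.

```python
# Toy illustration of learning a game purely by self-play.
# Assumed game (chosen for brevity, not Go): one-pile Nim where players
# alternately remove 1-3 stones and whoever takes the last stone wins.

MAX_PILE = 12
ACTIONS = (1, 2, 3)


def legal(pile):
    """Moves available when `pile` stones are left."""
    return [a for a in ACTIONS if a <= pile]


def update(q, pile, take, alpha):
    """Temporal-difference update from the mover's point of view:
    a move is worth the negation of the opponent's best reply."""
    remaining = pile - take
    if remaining == 0:
        target = 1.0  # taking the last stone wins
    else:
        target = -max(q[(remaining, a)] for a in legal(remaining))
    q[(pile, take)] += alpha * (target - q[(pile, take)])


def best_move(q, pile):
    """Greedy move for whoever is to play; both sides share one table."""
    return max(legal(pile), key=lambda a: q[(pile, a)])


def train(sweeps=200, alpha=0.5):
    q = {(p, a): 0.0 for p in range(1, MAX_PILE + 1) for a in legal(p)}
    for _ in range(sweeps):
        # Try every opening move from every pile size ("exploring starts"),
        # then let the machine finish the game against itself.
        for start in range(1, MAX_PILE + 1):
            for first in legal(start):
                pile, take = start, first
                while True:
                    update(q, pile, take, alpha)
                    pile -= take
                    if pile == 0:
                        break
                    take = best_move(q, pile)
    return q


if __name__ == "__main__":
    q = train()
    for pile in range(1, MAX_PILE + 1):
        print(pile, best_move(q, pile))
```

Nobody tells the program the strategy. After training, its preferred move from every winning position matches the known rule for this game: leave the opponent a multiple of four stones. Trying every opening move in each sweep is a simple, deterministic alternative to random exploration.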

Artificial intelligence in the real world

Artificial intelligence is mostly used to analyze large quantities of data. It is helpful in a range of activities, including weather forecasting, speech recognition and real-time translation, as well as predicting when telecommunication and power networks will need repairs, based on the probability of specific failures occurring. AI does more than mere calculations. For example, its soft skills can be applied to selecting candidates for specific jobs. AI matches people with tasks by assessing their competencies, preferences, dedication and emotional state. All these characteristics are difficult to capture and elude simple mathematical analysis.
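As a rough illustration of forecasting repair needs from event probabilities, the Python sketch below makes a simplifying assumption. It treats failures on each network segment as a Poisson process whose yearly rate is estimated from past failure counts. It then flags segments whose probability of at least one failure in the coming year exceeds a threshold. The segment names, counts and the 0.5 threshold are hypothetical, and real predictive-maintenance systems rely on far richer models and data.

```python
import math


def failure_probability(past_failures, years_observed, horizon_years):
    """P(at least one failure within the horizon), assuming failures follow
    a Poisson process whose rate is estimated from past counts."""
    rate = past_failures / years_observed  # failures per year
    return 1.0 - math.exp(-rate * horizon_years)


def segments_to_inspect(history, horizon_years=1.0, threshold=0.5):
    """Return (segment, risk) pairs whose estimated risk exceeds the threshold.

    `history` maps a hypothetical segment id to
    (failures observed, years observed)."""
    risky = []
    for segment, (failures, years) in history.items():
        p = failure_probability(failures, years, horizon_years)
        if p > threshold:
            risky.append((segment, round(p, 2)))
    # Highest-risk segments first, so repair crews can prioritise them.
    return sorted(risky, key=lambda item: item[1], reverse=True)


# Hypothetical maintenance records.
history = {
    "line-A": (1, 10),  # one failure in ten years
    "line-B": (6, 5),   # six failures in five years
    "line-C": (3, 4),   # three failures in four years
}
print(segments_to_inspect(history))
```

Sorting by estimated risk reflects the idea in the text: the model does not say when a line will fail, only which lines are most likely to need attention soon.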

It isn’t hard to picture a world in which machines, equipped with advanced speech recognition tools, handle customer complaints and even conduct entire conversations. The big question is whether people would feel comfortable talking to such machines. Will they miss the empathy and understanding that a human interlocutor can provide? It’s worth remembering that, to date, no machine has passed the famous Turing test, in which a human judge determines whether a conversation is being conducted with another person or with a machine capable of using natural language. The test is meant to show whether a machine is capable of human-like thinking. It is considered passed if the human is unable to tell whether the speaker is a machine. Still, artificial intelligence is making constant headway. In 2011, the Cleverbot program convinced more than 59% of its interlocutors that they were dealing with a real person.

Technology is also susceptible to abuse. This was demonstrated by the controversy surrounding Tay, a chatbot with elements of artificial intelligence based on a Microsoft neural network. Within just 24 hours, Microsoft had to shut Tay down after a “coordinated attack by a subset of people” exploited the chatbot’s ability to learn and, in the course of simulated debates with humans, taught it to exhibit racist behavior.

If used properly, though, artificial intelligence has the potential to produce numerous benefits. It can help make decisions, design new items or analyze complex events. Despite these upsides, however, I am confident it will never replace humans. Never. This is because people have traits that artificial intelligence will never possess: unpredictability, the capacity to act illogically, and emotions. But then again, is it really never?


TomK

We should be talking about fundamentally changing the education system, not about taxing robots. The problem is not robotization but that after 16 years of hard work at school, our kids will have to compete with robots, because at school they learn only what a machine can easily do after two weeks of being programmed!

Adam Spark Two

I’m not scared of general AI. I’m scared of so-called automated weapons systems that can target and kill people without human intervention. For example, a drone where you could select a 1 km x 1 km square and order it to kill all humans within that area, using high-resolution cameras, image recognition and conventional weapons.

I’m not sure how something like this would actually be a systemic threat to Western countries, which are already protected by nuclear weapons. A country like North Korea having nuclear weapons is much more of a threat than it having automated weapons.

But if these systems are developed and become generally accepted, it raises enormous moral questions about ongoing warfare and perhaps even the control of civilians. If the West developed and sold technology like this, dictatorial governments would be almost impossible to overthrow, with automated sentries around their palaces and drones that could be deployed at the touch of a single button to disperse protests. This is not a happy vision of the future.

Mac McFisher

Kill switches on humans are possible, even today. People who have implants such as heart pacemakers can be killed remotely over a wireless connection. The same goes for other implants that inject substances such as insulin into the blood, which could be made compulsory. The only obstacle is the lack of a legal basis for doing so, and that is exactly what they are trying to create now, justifying it with AI and unscientific arguments. I think everyone working in AI should oppose this and protest against it.

johnbuzz3

You can have the best of all worlds in the future if you want: a synthetic body housing a high-density DNA brain, with your mind constantly synced to the cloud in case of an unforeseen accident. The future is so bright.

Norbert Biedrzycki

Worldwide, 1.1bn workers and $15.8 trillion in wages are associated with activities that are technically automatable today.

John McLean

Sadly, the path to general artificial intelligence is long and complex. The problem is extremely complex and also requires solving many highly complex subproblems. I hope a leap in understanding will propel us further than we expect. But at the moment, the work seems to be mostly incremental.

Norbert Biedrzycki

What do you mean by the work being only incremental? What about the 2016 announcement of quantum computers affecting the statistical analysis that is key to deep learning?

CabbH

Using a real-life example, show how an inference engine/expert system would make a better decision than a traditional rules-based engine.

It’s actually harder than it sounds… It’s easy to explain why, technically, it’s a better way to reach a given goal; in practice, however, it’s just as possible to do it with decision trees.

The example I was thinking of was choosing a pet, showing how a decision tree could conclude that a lion is a good pet because it cannot take uncertainty into account.

Anyhow, I see nothing on the Net that explains why AI would make a better decision than a traditional rules engine.

Karel Doomm2

A growing number of areas of our daily lives are affected by robotics. To address this reality and to ensure that robots are and will remain in the service of humans, we urgently need to create a legal framework for their behavior.

Norbert Biedrzycki

The EU is working on this now. I’m following the progress constantly, since the outcome will affect all of us. What about Asimov’s laws of robotics?

DDonovan

This is cool. https://www.sciencedaily.com/releases/2016/12/161206103533.htm

TommyG

Example: Fan Hui, the European Go champion defeated by Google’s program, is not “the world’s best Go player.” He is ranked somewhere between 600th and 700th worldwide and is only an 8-level player; according to one source, there are 10 levels above him. If it helps get the facts straight, I’m all for AI.

johnbuzz3

That’s some scary…

It’s funny that, even though we are aware of this, we are still effectively building the thing that has the power to destroy humanity on a whim. Is that smart? But on the other hand, if we don’t use progress and intelligence for the good of our species, we will become extinct. As a civilization we have never come this far, and if we don’t go further, we will use up our resources. There are far too many people on our planet for us to stop now.

Mac McFisher

With many countries already having nuclear weapons, and more likely to acquire them in the future, espionage by spy agencies around the world is moving, and will keep moving, into high gear like never before, in every country!

A kill switch for a country’s AI systems, once the country has become dependent on them, is and will be a high-priority target!

TonyHor

This is already happening. Artificial intelligence is changing the way we work and live. In my accounting department we’re handling twice as many clients with half as many staff as we were 10 years ago. And these days 8 of the 10 of us remaining are only part-time, and only 3 of the whole team are now fully qualified.

All of this is thanks to improvements in remote working, scanning and record keeping, and better accountancy software. I’m basically employed to meet with clients and check for errors.

And occasionally I answer the phones.

Check Batin

This all assumes, for example, that the decision-making process would be similar to that of a human driver. It’s not. When humans drive, prevention doesn’t play a very significant role. We can make it play a significant role in autonomous driving. AI can and will observe situations that a human driver can never observe or learn.

DDonovan

Try this: http://futureoflife.org/background/benefits-risks-of-artificial-intelligence/

AI might become a risk; two scenarios are most likely:

The AI is programmed to do something devastating: Autonomous weapons are artificial intelligence systems that are programmed to kill. In the hands of the wrong person, these weapons could easily cause mass casualties. Moreover, an AI arms race could inadvertently lead to an AI war that also results in mass casualties.

The AI is programmed to do something beneficial, but it develops a destructive method for achieving its goal: This can happen whenever we fail to fully align the AI’s goals with ours, which is strikingly difficult.

Norbert Biedrzycki

See lots of good examples of how robots could help humans even now: https://mic.com/articles/118712/9-awesome-robots-that-are-helping-to-save-the-world#.8SA0OFm1O

Norbert Biedrzycki

Time to implement the good old Three Laws of Robotics?

Wiki: The Three Laws of Robotics (often shortened to The Three Laws or known as Asimov’s Laws) are a set of rules devised by the science fiction author Isaac Asimov. The rules were introduced in his 1942 short story “Runaround”, although they had been foreshadowed in a few earlier stories. The Three Laws, quoted as being from the “Handbook of Robotics, 56th Edition, 2058 A.D.”, are:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given it [sic] by human beings except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

Asimov also added a fourth, or zeroth law, to precede the others:

0. A robot may not harm humanity, or, by inaction, allow humanity to come to harm.

CaffD

This article was interesting. It is true that we need to think of the future, the future of the next generation, and develop courses that will benefit it. I read a lot of AI articles, and it is frightening how quickly this area of science is developing. At least your article opens a few more minds.