Last year saw a breakthrough in the spread of the notion of Artificial Intelligence. The term was frequently mentioned in the media, attracting growing attention, and not without reason. Much of the hype resulted from the activities of business gurus like Elon Musk and Mark Zuckerberg, as well as from the research being conducted by Google and new applications of virtual and augmented reality. As a consequence, this once somewhat abstract concept has been transformed into tangible solutions: new devices and services that change people’s lives.

What we saw in 2016 is only the start of a series of events that are bound to unfold in years to come. We will inevitably witness artificial intelligence spreading into all areas of life. We are in for a number of fascinating developments associated with the rise of autonomous transportation, quantum computers, supercomputers and innovative applications. The technological revolution will continue to change the ground rules of business.

Outlined below are some of the key technological trends that may dominate digital technology in 2017. I have divided them into four main segments:

– Artificial Intelligence as a foundation for key technologies

– Artificial Intelligence for all

– The lasting marriage of technology and human nature

– Technology putting pressure on business

Artificial Intelligence as a foundation for key technologies

The term ‘Artificial Intelligence’ has had an incredible media career. This is no wonder considering how much it gives us to think and talk about. Prepare to see it gain even more publicity this year.

Algorithms that enable computers to learn on their own and make decisions, programs capable of replicating selected mental functions, reading and interpreting texts, and even understanding natural language: these all underpin artificial intelligence. I think we should take note of three developments that may soon propel technology to make an enormous leap forward on the back of AI.

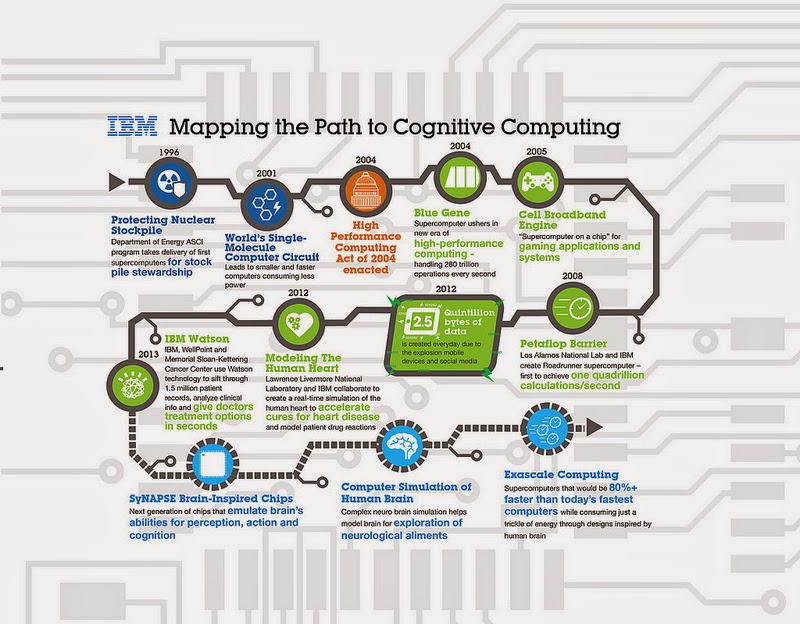

Cognitive computing

Endowed with ever-increasing computational power, computers are beginning to embrace processes that mimic human cognition. Work is under way to develop quantum computers whose data processing capacities will be many times those of today’s binary machines. Intel Labs, IBM and Google Quantum A.I. Lab are all seeking to emulate human brain functions. The key presumption is that the simulated systems will operate faster than the brain itself. While neurons transmit information at approximately 150 meters per second, light in an optical fiber travels more than a million times faster. The pivotal observation is that by analyzing large data sets, today’s machines will be able to learn and self-improve. The best-known example is IBM Watson, a computer capable of answering medical and economic questions with increasing accuracy.
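The speed comparison above can be checked with some quick arithmetic. The sketch below is only a rough sanity check: the 150 m/s neuron figure comes from the text, the speed of light in a vacuum is the defined SI value, and the refractive index of 1.47 is an assumed typical value for silica fiber rather than a number from any specific product.

```python
# Rough sanity check of the neuron vs. optical signal speed comparison.
NEURON_SPEED_M_S = 150.0                  # figure quoted in the article
LIGHT_SPEED_VACUUM_M_S = 299_792_458.0    # defined SI value
FIBER_REFRACTIVE_INDEX = 1.47             # assumption: typical silica fiber


def speed_ratio(signal_speed_m_s: float,
                baseline_m_s: float = NEURON_SPEED_M_S) -> float:
    """Return how many times faster a signal is than the baseline."""
    return signal_speed_m_s / baseline_m_s


# Light in a vacuum compared with a neuron.
vacuum_ratio = speed_ratio(LIGHT_SPEED_VACUUM_M_S)

# Light in glass is slowed by the refractive index of the fiber.
fiber_ratio = speed_ratio(LIGHT_SPEED_VACUUM_M_S / FIBER_REFRACTIVE_INDEX)

print(f"Vacuum: about {vacuum_ratio / 1e6:.2f} million times faster")
print(f"Fiber:  about {fiber_ratio / 1e6:.2f} million times faster")
```

Under these assumptions, the ratio comes out just under 2 million for light in a vacuum and somewhat lower for light in glass fiber. Either way, the gap between biological and optical signaling spans roughly six orders of magnitude.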

Path to Cognitive Computing. Source: IBM

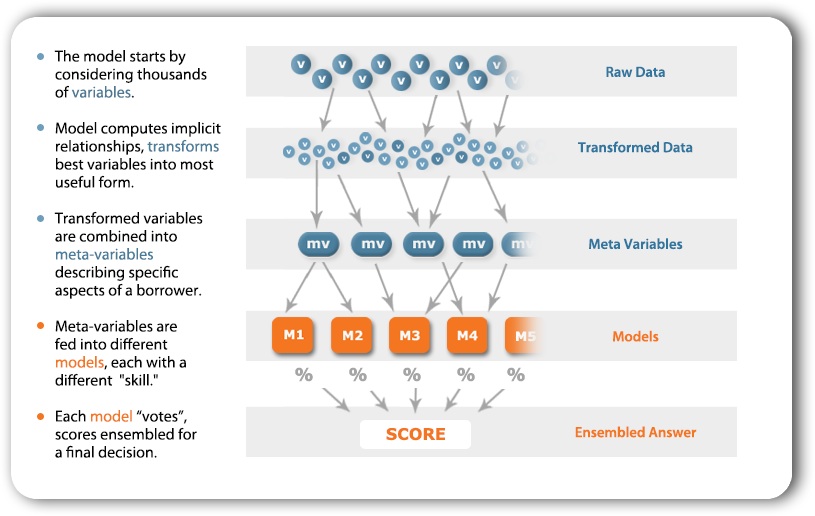

Machine learning

Linked inextricably to “thinking” computers is machines’ ability to learn. Modeled on neural networks, this ability enables computers to predict trends. What this means for the financial world is, among other things, a chance to base future transaction models on real-time analyses. According to a report by McKinsey & Company, more than a dozen European banks resolved in 2016 to replace traditional statistical models with self-learning machines. Marketing is another area poised to benefit from machine learning in designing promotions and selecting sales channels. Even today, self-learning machines make recommendations to online shoppers, allowing websites to respond to customer behaviors in real time and, ultimately, maximize sales. The implications of advances in this field are going to become increasingly evident.

Machine Learning – process description based on neural networks.

Natural Language Understanding

This trend may unfold in a number of ways. Natural language understanding comes into play when, for instance, a robot receives and responds to a voice instruction. The term encompasses all issues associated with having machines read and comprehend complex texts, such as press articles and reports. For corporate workers, having computers read e-mails and arrange them in a prescribed order is a great help in their daily work. One can expect a slew of new companies investing heavily in services that rely on natural language understanding technology. Even today, machines can be instructed to produce reports on any topic (as is done by, for instance, Associated Press) or prepare a summary based on data supplied in a variety of formats (charts, infographics, numbers).

Time for independent machines

It is fascinating to see computers thinking ever more efficiently, interpreting gigantic data sets, drawing conclusions and offering independent interpretations. As reluctant as I am to join the doomsayers who claim that humanity is going to perish under machine domination, I admit that game-changing technology is looming ahead, and the way it threatens to make all things different makes me a bit uneasy. For the time being, I am confident this year will bring a number of interesting developments proving that computers have made a great deal of headway towards becoming “mentally” independent.

Adam Spikey

I guess that is still about simple queries, not about conversation, not even about something as simple as “The amount to pay on my invoice is higher than it is supposed to be. Why is that?”

Jacek B2

Microsoft for use with their “Blockchain as a Service” platform, Azure. Before Ethereum, altcoins have essentially been interchangeable with Bitcoin, offering only incremental “improvements”

John Accural

What really is artificial intelligence or human intelligence? When you acquire senses, is that the core of intelligence? When you apply senses (think/remember), is that the core of intelligence? Or are the final resulting actions the intelligence? A giant rock is not intelligence. A giant mechanical structure is not intelligence. Brains aren’t. Senses aren’t.

Actions are !!!!

Why?

Because the rock, the mechanical structure, the empty brain, and the senses, SIT THERE. Actions change the universe!!! I can get off my chair and build a rainbow bridge to Mars and a whole tunnel!

And yes! The rock, the mechanical structure, the empty brain, and the senses more or less HELP actions be acquired and then USED!

Norbert Biedrzycki

Very interesting point of view. What if a stone had a basic intelligence we don’t know about? Think about life based not on carbon and water but on silicon.

Jacek B2

Very valid point

Mac McFisher

I believe AIs will truly be able to think one day. As for the idea that they are conventional machines: no, self-developing models are not conventional machines. Think of electronic circuit simulators, for example: not everything that happens there can be simply “pre-programmed”.

John McLean

I thought that machines couldn’t think and could never be anything like humans. Maybe I was wrong. In fact, robots, and especially artificial intelligences, are becoming smarter.

What is AI really able to do? Is it able to do more than just “chat”?

CabbH

The basic problem with humans is this basic type of thinking, the wish to always have oversight and control; this is the basis of all conflict in the world. The sooner humans realize we don’t have the right to impose slavery on other humans or machines, the sooner we can make true progress. It might take A.I. in a more rational position of power to address the mindless, foolish conduct of the human race, which is on a course for self-destruction. I fear A.I. far less than any government at present.

TomK

Very interesting piece. I’m personally getting more and more afraid of the future.

Norbert Biedrzycki

Thank you. I’m planning to write more on these topics, including the ethical aspects of relations between machines and humans.

TommyG

Incredible advances in machine-learning have already led to Artificial Intelligence beating one of humanity’s best Go players – and a team of doctors in London has trained Artificial Intelligence to predict when the heart will fail

Jacek B2

Interesting point of view

CabbH

What about the target market of another 7.5 billion human machines? I would not mind carrying similar technology on me full-time, constantly listening to my biological pump, ventilator, veins, etc.

TomCat

Additionally, on top of what I just wrote here, we are social beings and we need people around us. Many people seem to be more comfortable dealing with people through machines (mobile, messenger, etc.) than in person. Sad. As machines get more intelligent, we might get better at adapting to this. People may end up preferring to deal with machines rather than with people. Of course, this says something about who we are. Again. Sad.

Norbert Biedrzycki

But autonomous vehicles, for example, will at some point be faced with decisions that will result in fatalities regardless of their actions. How do we deal with such an ethical issue? Are we going to be comfortable with such fatalities?

Karel Doomm2

In 2015, San Francisco-based startup Simbe Robotics unveiled Tally, billed as the world’s first fully autonomous shelf auditing and analytics solution, a robot that roams supermarket aisles alongside human shoppers during regular business hours. It is happening now!

Norbert Biedrzycki

Thank you. You’re right. We are now seeing the beginning of the revolution.

Karel Doomm2

Machines can take the jobs, but should not take the incomes: the job uncertainty that engulfs large swaths of society should be matched by a welfare policy that protects the masses, not only the poor but the rich as well.

TomCat

Interesting but not holistic. What about big data, IoT, the sharing economy? Or at least blockchain and cryptocurrencies?

Norbert Biedrzycki

Covered in other posts or to be covered soon