About machine consciousness

In reply to a journalist’s question on whether machines will ever be able to feel, Oren Etzioni, co-founder and CEO of the Allen Institute for Artificial Intelligence, an organization established two years ago by Microsoft co-founder Paul G. Allen, said: “The short answer is no. An expanded one is: no, they won’t – people have an overblown perception of what computers can do in this day and age”. Another notable quote came from Stuart J. Russell, a scientist credited with major contributions to AI research and the author of many publications on the subject: “The biggest obstacle to the development of AI is we have absolutely no idea how the brain produces consciousness. If you gave me a trillion dollars to build a sentient or conscious machine, I would give it back. I don’t get the sense we are any closer to understanding how human consciousness works than we were 50 years ago”. The rise of machine consciousness?

Let us look at some undeniable facts that offer an undistorted view of where we stand in developing Artificial Intelligence. Is the experts’ skepticism justified?

Enthusiasts riding the wave

Not a month goes by without reports on global corporations such as Google, Amazon, IBM, Facebook, Apple, Microsoft and Samsung investing in AI and on the proliferation of start-ups developing ultra-modern smart technologies. The trend is as popular with mainstream media as it is with niche technology portals. It is accompanied by an ongoing debate on the possible consequences of the spread of artificial intelligence. The buffs are quick to enumerate the benefits they expect to see within a decade or two, including computers responding to human facial expressions, emotions and voice, self-improving computer systems using successive dataset operations (machine learning), implantable nanobots capable of seeking out and destroying cancer cells in human bodies, computer decision support systems, smart technologies in our homes and autonomous vehicles. Even today, the effort to prolong human life, the striving for greater data processing power and the ongoing personalization of computers provide a fuel of sorts for global business and are no longer the domain of Hollywood directors, who have exploited AI for years.

Money drives intelligence

Continued research in the field and greater funding will inevitably result in a gradual commercialization of AI. Within just a few years, the involvement of big corporations can be expected to produce revolutionary changes in both medicine and business. Forecasting agencies predict a quantum leap in the next five years. During this time, the funds invested in AI-related projects will grow by tens of percent while fascination with artificial intelligence is poised to snowball. According to CB Insights, in 2015 alone, the global financial market saw the arrival of approximately 300 new large companies whose mission statements featured such keywords as artificial intelligence, machine learning and neural networks. According to a report by the market research agency TechSci Research, the United States AI market will grow by 75 percent between 2016 and 2021. The money will go to making AI better adapted for consumer electronic devices, scientific research, autonomous cars and R&D activities in the healthcare industry. Meanwhile, BCC Research, a company specializing in technology market research, projects the global market for smart machines (neurocomputers, expert systems, autonomous robots, intelligent assistants) to grow to US$15.3 billion by 2019, with an annual growth rate of 19.7 percent. Without a doubt, this is the fastest-growing segment of the technology industry.

Intellectual backing

The global drive in business would not be possible without research backing. Today’s AI investment boom would never have happened without the involvement of science and technology institutes, research organizations, technology hubs and non-profits. The aforementioned Allen Institute for Artificial Intelligence employs dozens of scholars and technology experts in various fields. Its mission, as proclaimed on the Institute’s website, is: “to contribute to humanity through high-impact AI research and engineering”. Besides research institutes, artificial intelligence projects receive the financial support of technology corporations, which vie to outdo one another in launching new high technology projects. The last four years have brought about a breakthrough. As early as 2012, Google was engaged in AI ventures. In 2014, it announced an investment of hundreds of millions of dollars in the start-up DeepMind. In 2014, Mark Zuckerberg, Elon Musk and Ashton Kutcher joined forces to invest substantial amounts in Vicarious FPC, a company dedicated exclusively to the most visionary AI projects. As declared in its mission, Vicarious FPC aims to “build a unified algorithmic architecture to achieve human-level intelligence in vision, language and motor control”. One of Facebook’s strategic objectives is to create a powerful data processing system and develop a computer-based facial recognition technology. Huge resources (money, intellectual capital and human labor) have been deployed to develop AI. All this, in my view, may soon put an equivalent of a simple AI in the laboratories of large companies and research institutes.

The time of intelligent change

In view of the projected investment in research, it is interesting to look at some of the forecasted areas of AI development. The most recent report on AI by the analyst firm Gartner offers a few examples. According to Gartner, within the next few years, IT systems will be able to make autonomous economic decisions. By 2020, a staggering 5 percent of the world’s economic transactions will be carried out by software algorithms with the capacity to draw conclusions from data sets entered into computers. According to Gartner, by 2018, an astounding 20 percent of business content and information will be authored and published by computers. We are therefore referring to intelligent or nearly intelligent processing of data in various categories. Speaking of which, note the market presence of companies which even today offer systems that autonomously convert data into reports that are meaningful for humans. One of them is Yseop, a provider of a service that may soon revolutionize the jobs of accountants, stock exchange analysts, business strategists and managers. With the help of a simple interface, the user may enter numbers, charts and infographics into a computer. The machine will then automatically compile, collate and process the data. Even today, the Associated Press agency relies on computers that autonomously create reports that are then used by its journalists. Their quality is very high and only some need to be edited by humans.

Machines take to science

In its predictions about the near future, Gartner highlights one trend of great significance for the further development of AI systems: “machine learning”. One of the most fundamental questions asked by AI researchers is how to create AI systems that will autonomously improve their performance by learning from experience. How can the rules that govern human learning be applied in developing computers? This brings us to what experts believe to be the biggest challenge faced by AI developers, an idea that is as fascinating as it is controversial: how to create advanced systems that will replace humans in decision making. One can immediately see that this is about more than just automating repetitive processes, which has already been done in business and industry. We are referring to machines whose algorithms recognize patterns, predict future results and apply that knowledge in decision making. A practical answer to this challenge may be to use a technology based on artificial neural networks that will mimic the human brain (the quantum computing trend). Picture a machine capable of analyzing data and drawing conclusions that is employed in medicine. Based on parameter analysis, computers would diagnose health conditions, detect anomalies and predict diseases. To quote Oren Etzioni again: “What if a cure for intractable cancer is hidden within the tedious reports on thousands of clinical studies? AI will be able to read and – more importantly – understand scientific text. These AI readers will be able to connect the dots between disparate studies to identify novel hypotheses and to suggest experiments which would otherwise be missed”.

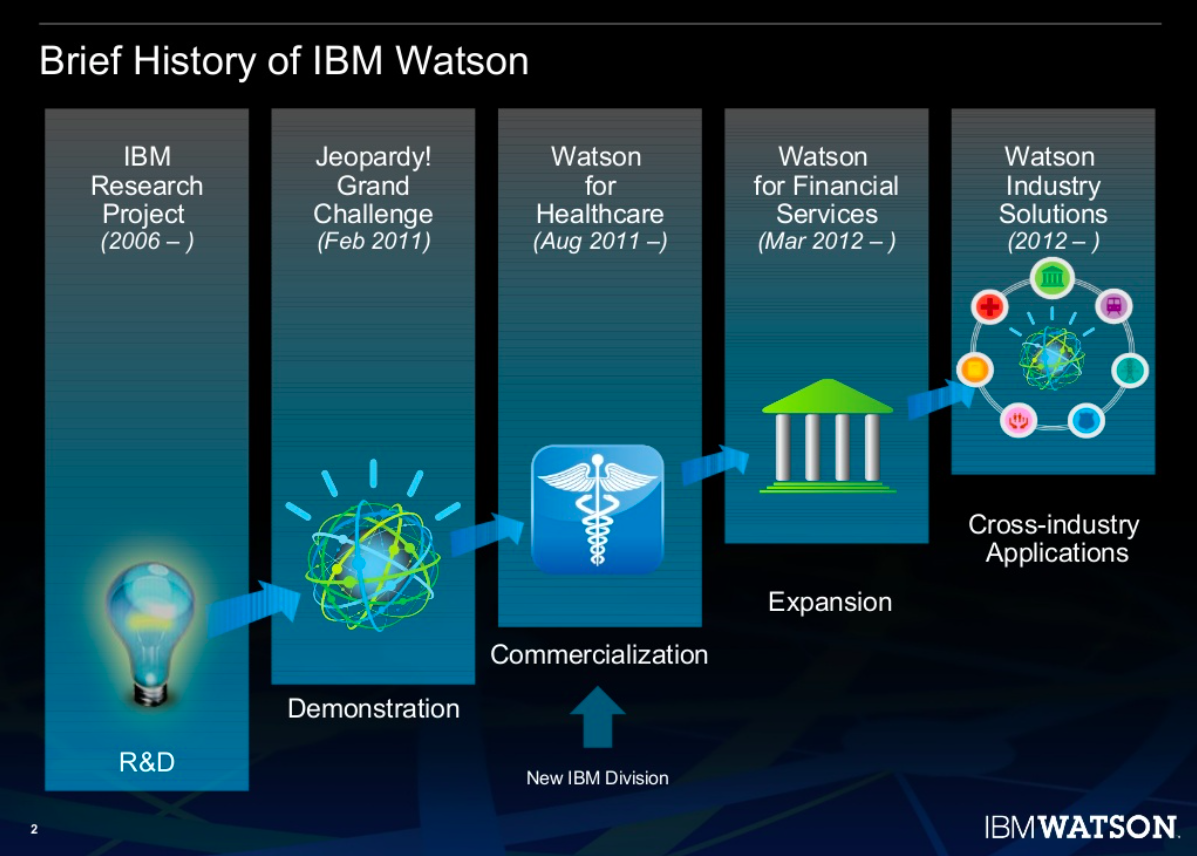

Can you hear me, Mr. Watson?

Another serious matter that is central for AI developers is that of natural language processing. Many managers believe that computers that emulate the ability to understand human language may revolutionize the drive to achieve integration and collaboration between human and machine intelligence. Google claims that machines currently handle 20 percent of telephone inquiries from customers. Research in the field aims to develop systems that will hold a dialogue with people rather than merely respond to simple commands. Some add that the next breakthrough, some 20 years away, will be for computers to learn to fully recognize human facial expressions and read human emotions. What is certain is that major advances in the field are seen even today, some of them right under our noses. We are often challenged to assess their scale and significance for the future. The IBM computer Watson (2,880 cores, 15 TB of RAM) was built for the express purpose of responding to questions asked in natural language. The machine relies on natural language processing for its standard operations. To make the answers possible, the computer has access to a database of millions of pages of various content, including dictionaries and encyclopedias, and is programmed to use hundreds of parallel algorithms to find the right answer. With this mechanism, it can analyze huge data sets from various areas such as business, economics and medicine. By communicating with humans through voice, Watson “understands” the questions asked and problems presented, gathers successive data and “learns” from them in keeping with the idea of machine learning.

I am your assistant

As computers “acquire” intelligence, they learn to communicate with people in an increasingly “human-like” manner. Their responses will be a function of their ability to read and process diverse data. The pioneering research which has led to the development of IBM Watson will be disseminated and commercialized. It is nearly certain that autonomous assistants will become very popular in the near future. These applications will help us acquire knowledge and make decisions in our day-to-day personal lives. Even today, it takes little imagination to picture that happening. For a number of years now, iPhone users have enjoyed the company of Siri, which responds through voice to various simple questions about the time of day, the weather, the day’s date as well as finance, music, e-mail account content and smartphone contacts. Similar products, which are continually being improved but are already available to individual users, include Amazon Echo, which also relies on natural language processing.

The optimists, realists and pessimists

AI continues to evoke mixed feelings. Enthusiasm meets fear, fueled by filmmakers, writers and wary futurologists. Facebook founder Mark Zuckerberg ranks among the technological optimists and business pragmatists. He said that “AI will reach a point where it will benefit companies large and small. We are working on AI because we believe that more intelligent services will be more useful”. His sober approach is not shared by all technology market players. According to a report prepared for Baker & McKenzie, out of 424 financial specialists, 76 percent believe that financial oversight authorities are ill-prepared to work with new AI software whereas 47 percent are doubtful about their own organizations’ ability to understand the risk inherent in using AI. The respondents were also found to believe that dependence on artificial intelligence will bring about a cut in employment. This finding conceals a whole spectrum of emotions and views on AI. Sixteen years ago, Bill Joy shared his thoughts on AI in a legendary and much-quoted article, “Why the Future Doesn’t Need Us”, published in Wired magazine. Many of its author’s reflections can now be seen as both extremely pessimistic and incisive. Alongside the benefits that Joy saw in the development of AI, he also shared some fears. He said: “We have yet to come to terms with the fact that the most compelling 21st century technologies – robotics, genetic engineering and nanotechnology – pose a different threat than the technologies that have come before. Specifically, robots, engineered organisms and nanobots share a dangerous amplifying factor: they can self-replicate.”

What does the future hold?

Well, the issue of AI appears to be so complex that both extreme optimists and pessimists still stand a good chance of winning the battle of opinions on AI and its role in our lives and perhaps in the life of our entire species. One thing is certain: we are living in a time in which the notions of progress and of benefits for the human race need to be redefined. The categories developed ages ago may no longer suffice to grasp reality and understand our place in the age of personal assistants, computers, autonomous decision-makers and nanobots that roam around within our bodies.


Brief history of IBM Watson (source: IBM)

Guang Go Jin Huan

I think as long as everyone involved is aware of the impediments and deficiencies of these algorithms and models, we should be fine. The algo maybe did not catch the recent virus because it was such a rare event in the data, yet it still might outperform most GPs by giving a more accurate diagnosis.

Let us take the unfortunate cases of autonomous driving, which are horrible of course, yet in 2-3 years we will potentially have a situation in which AVs produce far fewer severe accidents and hopefully fewer deaths. The development cycle of innovative tech is undeniable for AI. But it is also good that some form of awakening happens: it will bring expectations to a more realistic level, and people will fear AI less because they have seen it in many aspects of life and know its drawbacks.

Adam Spikey

Brace yourselves, they are coming 🙂

And99rew

I’m pretty sure that the ratio between jobs lost to automation and jobs created by automation is not 1:1. I would respectfully suggest that the losses will significantly outpace the creations.

TomK

The problem is not robotization but that after 16 years of hard work at school our kids will have to compete with robots, as they learn there only what a machine can easily do after 2 weeks of being programmed!

Karel Doomm2

I really enjoyed reading your article. Truly inspiring, as I am currently diving into the world of AI.

Adam Spark Two

Musk, Wozniak and Hawking urge ban on AI and autonomous weapons: Over 1,000 high-profile artificial intelligence experts and leading researchers have signed an open letter warning of a “military artificial intelligence arms race” and calling for a ban on “offensive autonomous weapons”. If this is not scary …

CaffD

AI in use today: https://www.forbes.com/sites/robertadams/2017/01/10/10-powerful-examples-of-artificial-intelligence-in-use-today/#27d6720b420d

TonyHor

A lot of good examples. Right. But are all of them really applicable? In the future, yes, potentially.

Check Batin

Cyber and Drone Attacks May Change Warfare More Than the Machine Gun — Battles fought by machines and on the Internet might change the moral calculus of how and when we fight.

TomCat

We humans (our early ancestors) have evolved emotions for social and physical requirements. Take fear, for example: we evolved this emotion, and it helped us to survive when we saw a sabertooth tiger. Our brains create emotions that cause us to react in certain ways. They only came around as they gave us an advantage.

Karel Doomm2

I’m not sure about the technological advancements, but IBM Watson has gone forward and signed agreements with NASA to provide a modified version of Watson to the Agency. This is the first of its kind and sets a precedent for AI in NASA.

Would be interesting to see results there

John McLean

While we have seen much growth with AI back in 2016, we’ll see further growth in 2017. Back in 2016, we learned that Amazon’s Alexa, which manifests artificial intelligence in the form of being able to speak human language, is now present in over five million homes. You can ask Alexa about the weather or tell her to order you a taxi, and she’ll respond. This means that last year, AI hit mainstream adoption. http://bit.ly/2qVbril

John Accural

I watched a very interesting program about various theories about the start of the universe, string theory, expansion, the big bang, parallel universes – you know the stuff. Out of all the people presented on the show, Kaku seemed to have the best way of explaining things, and you could always tell it is a passion for him. He is a real futurist with a strong point of view on Artificial Intelligence

Michio Kaku – “Michio Kaku is an American theoretical physicist, futurist, and popularizer of science.”

John McLean

Check this one https://www.theguardian.com/technology/2017/jan/15/driverless-cars-12-things-you-need-to-know all about automation in transportation

TomHarber

I love this guy. Scientist in a field of quantum maths, physics. Futurist. His books on world development and technology predictions are masterpieces

DDonovan

Today, search engines are good at processing or indexing but can understand little about the questions asked of them. But that’s it. I do not fear AI.

Apple’s voice assistant, Siri, is cute but really does not understand what a person is saying – it has a small library of prepared answers. The Watson supercomputer can beat anyone at Mastermind but it would not get very far at a poker table. But again – this is all they can do.

orlando-florida

Ha! I would play poker with Siri, rather than have a telemarketer version call me. There is no guarantee that any of them would be great telemarketers. 🙂 Although, I can see the script now. Or… maybe even an investment scam. The Machine would be part Troy McClure from the Simpsons, part Siri. You might remember me as a former High Finance real estate mogul.

https://en.wikipedia.org/wiki/Troy_McClure

DDonovan

Don here – No, I do not remember you. Apologies 🙂 But I would prefer Siri, or even HAL from Space Odyssey.

johnbuzz3

You can have the best of all worlds in the future if you want: a synthetic body with a high density DNA brain housed within it, and your mind constantly synced to the cloud in the event of an unforeseen accident. The future is so bright.

Mac McFisher

The ultimate question is whether we believe that AI will ever have the potential to be harmful or to be a threat to the world. What threat? A danger that it can enslave all people? Can even humans completely enslave all people? Can anyone? And people can use AI to fight against AI. If that becomes necessary, doubting that they are capable of it is doubting the worth of human nature. But so far, the threat has not been AI itself but the people who use AI against other people, and so it seems to remain, even though these people may say that AI is responsible and that AI is the threat, just so that no one can see that they are the threat.

johnbuzz3

Mac – Of course there is the military industrial establishment, which, I’m sure, once AI becomes even more complex and viable as ‘thinking weaponry’, will become more of a threat to itself and its makers than anything ethical you and I would produce.

In the movie Red Planet, with good ol’ Val Kilmer, there’s a military AI bot named AMEE which seemed to me to be a viable, very possible creation that could be made very soon, relatively speaking. I would be a little afraid of something like that getting into the wrong hands. Or say the ‘spider AIs’ in Minority Report being used by our wonderful ‘COPS’ mentality law enforcement officials…

Jacek B2

Forrester Research predicted a greater than 300% increase in investment in AI in 2017 compared with 2016. IDC estimated that this technology market will grow from $8 billion in 2016 to more than $47 billion in 2020.

TonyHor

The human brain is specifically designed to INSTANTLY DISCARD certain information the evolutionary process has deemed superfluous. AI in most cases involves computational assessment of everything. With that in mind, it is important to pre-filter the perception algo to disregard irrelevant data. People are way too advanced to be replaced by AI.

TomK

Way more advanced now, but that might not be the case in the future. Machines are more sophisticated than us in logical thinking and speed of data processing. They cannot learn by themselves. Yet. But the machine learning era is happening right now.

Jacek B2

Very valid point